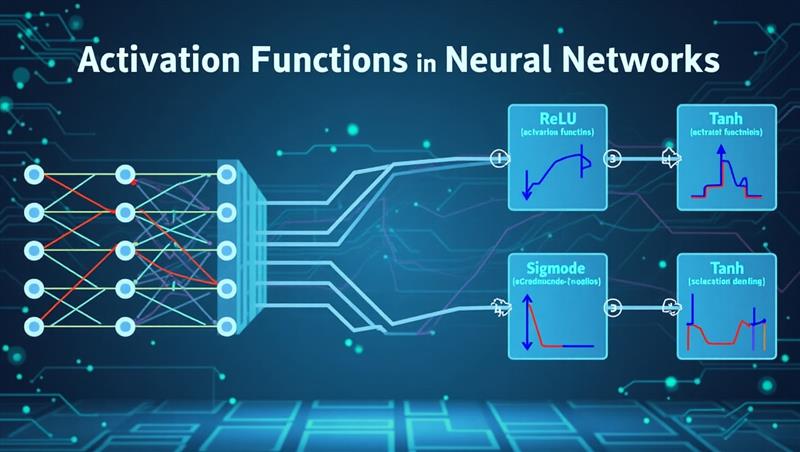

Activation functions in neural networks

Jul 31, 2025 Vikas Suhag

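The binary (step) activation function is suitable only for binary classification. It outputs one of two values depending on whether the input crosses a threshold, and because its gradient is zero everywhere except at the threshold, it cannot be trained with backpropagation.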
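As a minimal sketch of the binary step function, assuming a threshold of 0 and outputs of 0.0 and 1.0 (the names `binary_step` and `numerical_slope` are illustrative, not from any library), the following shows that the output jumps between two values while the slope away from the threshold is zero:

```python
def binary_step(x, threshold=0.0):
    """Return 1.0 when the input reaches the threshold, otherwise 0.0."""
    return 1.0 if x >= threshold else 0.0


def numerical_slope(f, x, h=1e-4):
    """Central-difference estimate of the derivative of f at x."""
    return (f(x + h) - f(x - h)) / (2 * h)


# Sample points chosen away from the threshold (an assumption for illustration).
points = (-2.0, -0.5, 0.5, 2.0)
outputs = [binary_step(x) for x in points]
slopes = [numerical_slope(binary_step, x) for x in points]

print(outputs)  # [0.0, 0.0, 1.0, 1.0]
print(slopes)   # [0.0, 0.0, 0.0, 0.0]
```

With a slope of zero at every sampled point, a gradient-based update would leave the weights unchanged.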

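A linear activation function is of little use in hidden layers. It passes its input through unchanged (up to a constant factor), so a stack of linear layers collapses into a single linear transformation no matter how many layers are used. Its derivative is also a constant that does not depend on the input, so backpropagation gains no information from it beyond what a single linear layer could learn.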

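So only non-linear activation functions, whose gradients carry useful information for backpropagation, should be used in hidden layers. Backpropagation readjusts the weights to improve predictions. With non-linear functions, stacking multiple layers becomes worthwhile, because each layer can add representational power.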
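To illustrate why stacking layers with a linear activation adds nothing, here is a small sketch in plain Python. The weight matrices `W1` and `W2`, the input `x`, and the helpers `matvec` and `matmul` are made-up values and names for illustration, and biases are left out for brevity:

```python
def matvec(w, x):
    """Multiply matrix w (list of rows) by vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def matmul(a, b):
    """Multiply matrix a by matrix b (both lists of rows)."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


# Hypothetical weights: a 2-input, 3-unit hidden layer and a 1-unit output layer.
W1 = [[1.0, 2.0], [0.5, -1.0], [3.0, 0.0]]
W2 = [[1.0, -1.0, 2.0]]
x = [2.0, 3.0]

# Two layers with an identity (linear) activation between them.
two_layer = matvec(W2, matvec(W1, x))

# One layer whose weights are the product of the two matrices.
W_combined = matmul(W2, W1)
one_layer = matvec(W_combined, x)

print(two_layer)  # [22.0]
print(one_layer)  # [22.0]
```

The two-layer network and the single combined layer give the same output for every input, which is why a non-linear function is needed between layers.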

10 Non-linear Neural Network Activation Functions

- Sigmoid / Logistic - It accepts any real-valued input and produces an output in the range 0 to 1, which makes it useful for predicting probabilities. The larger (more positive) the input, the closer the output is to 1.0; the smaller (more negative) the input, the closer the output is to 0.0.

- Tanh function (Hyperbolic Tangent) - The output range is -1 to +1: large positive inputs tend towards +1 and large negative inputs tend towards -1. It is usually used in hidden layers because its output is zero-centred, so the mean of a hidden layer's activations stays near 0, which helps centre the data for the next layer. Sigmoid and Tanh both suffer from the vanishing gradient problem, because their gradients approach zero for inputs of large magnitude.

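- ReLU function (Rectified Linear Unit) - It outputs the input when it is positive and 0 otherwise, so it does not activate all neurons at the same time. It suffers from the dying ReLU problem: for negative inputs the gradient is 0, so a neuron whose inputs stay negative stops updating during backpropagation.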

- Leaky ReLU function - It gives negative inputs a small fixed slope (commonly 0.01) instead of 0, which addresses the dead neuron problem, but its predictions for negative inputs may be inconsistent.

- Parametric ReLU function - It treats the slope for negative inputs as a parameter learned during training. It is used when Leaky ReLU fails to solve the dead neuron issue.

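- Exponential Linear Unit (ELU) function - It addresses the problems of ReLU and Leaky ReLU by using a smooth exponential curve for negative inputs, which is where it differs most from them. It is more computationally expensive because of the exponential, and since it is unbounded for positive inputs it can still suffer from the exploding gradient problem.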

- Softmax function - It generalises the sigmoid to multiple classes, turning a vector of scores into probabilities that sum to 1. It is usually used in the last layer for multi-class classification.

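- Swish - A smoother alternative to ReLU developed by researchers at Google, defined as the input multiplied by its sigmoid. It is generally recommended only for very deep networks; a common rule of thumb is more than 40 hidden layers.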

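- GELU (Gaussian Error Linear Unit) - It multiplies the input by the standard normal cumulative distribution function evaluated at that input, giving a smooth curve that is widely used in Transformer models.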

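- SELU (Scaled Exponential Linear Unit) - It is an ELU multiplied by a fixed scale factor, with constants chosen so that activations tend to keep a mean near 0 and a variance near 1 from layer to layer (self-normalisation).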
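The functions listed above can be sketched in a few lines of plain Python using only the standard `math` module. This is a scalar sketch for illustration rather than a production implementation. The default slope of 0.01 for Leaky ReLU and `alpha=1.0` for ELU are common choices assumed here, and Parametric ReLU has the same form as `leaky_relu` with `slope` learned during training rather than fixed.

```python
import math

# Constants from the SELU definition.
SELU_ALPHA = 1.6732632423543772
SELU_SCALE = 1.0507009873554805


def sigmoid(x):
    """Logistic function, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def tanh(x):
    """Hyperbolic tangent, output in (-1, 1)."""
    return math.tanh(x)


def relu(x):
    """Pass positive inputs through, clamp the rest to 0."""
    return max(0.0, x)


def leaky_relu(x, slope=0.01):
    """Like ReLU, but negative inputs keep a small slope.
    Parametric ReLU has the same form with a learned slope."""
    return x if x > 0 else slope * x


def elu(x, alpha=1.0):
    """Exponential curve for negative inputs, identity for positive ones."""
    return x if x > 0 else alpha * (math.exp(x) - 1.0)


def selu(x):
    """ELU with fixed alpha, multiplied by a fixed scale."""
    return SELU_SCALE * elu(x, SELU_ALPHA)


def swish(x):
    """Input multiplied by its own sigmoid."""
    return x * sigmoid(x)


def gelu(x):
    """Input weighted by the standard normal CDF (exact erf form)."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def softmax(scores):
    """Turn a list of scores into probabilities that sum to 1.
    Subtracting the maximum keeps math.exp from overflowing."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([2.0, 1.0, 0.1])  # example scores, chosen arbitrarily
print(sigmoid(0.0))  # 0.5
print(relu(-3.0))    # 0.0
print(probs)
```

The largest score in the example receives the largest probability, and the three probabilities add up to 1 (up to floating-point rounding).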

Content License

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

© 2026 Learn with Vikas Suhag. All Rights Reserved.